One advantage of using Bayes factors (or any other form of Bayesian analysis) is the ability to engage in optional stopping. That is, one can collect some data, perform the critical Bayesian test, and stop data collection once a pre-defined criterion has been met (e.g., once “strong” evidence has been found in support of one hypothesis over another). If the criterion has not been met, one can resume data collection until it is.
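The procedure can be sketched as a loop that recruits one participant at a time and recomputes the Bayes factor after each one. This is a minimal sketch, not the analysis used in this post. It assumes a one-sample (or paired-difference) design tested against a mean of zero. It also substitutes the BIC (unit-information) approximation, BF01 ≈ √n · (1 + t²/(n − 1))^(−n/2), for a default JZS Bayes factor. The names `sequential_test`, `log_bf10_bic` and `draw`, and the default thresholds, are hypothetical.

```python
import math
import random


def log_bf10_bic(n, total, total_sq):
    """BIC approximation to log BF10 for a one-sample test of mean = 0.

    Works from running sums, so each update after a new participant is O(1).
    Assumes the scores are not all identical (the variance must be > 0).
    """
    sse0 = total_sq                       # residual sum of squares under H0 (mean fixed at 0)
    sse1 = total_sq - total * total / n   # residual sum of squares under H1 (mean estimated)
    # log BF01 = 0.5*log(n) + (n/2)*log(sse1/sse0); BF10 is its reciprocal
    return -0.5 * math.log(n) - (n / 2) * math.log(sse1 / sse0)


def sequential_test(draw, n_min=20, upper=6.0, lower=1 / 6, n_max=200):
    """Recruit participants one by one until a stopping criterion is met.

    Returns (n, decision, bf10), where decision is "H1", "H0" or "undecided".
    """
    total = total_sq = 0.0
    log_bf = 0.0
    for n in range(1, n_max + 1):
        x = draw()                        # collect the next participant's score
        total += x
        total_sq += x * x
        if n < 2:                         # a variance needs at least two scores
            continue
        log_bf = log_bf10_bic(n, total, total_sq)
        if n > n_min and log_bf > math.log(upper):
            return n, "H1", math.exp(log_bf)
        if n > n_min and log_bf < math.log(lower):
            return n, "H0", math.exp(log_bf)
    return n_max, "undecided", math.exp(log_bf)


# Usage: simulated participants from a population with a true effect of d = 0.5.
rng = random.Random(42)
n, decision, bf10 = sequential_test(lambda: rng.gauss(0.5, 1.0))
print(n, decision, round(bf10, 2))
```

Working with running sums keeps the check after each participant cheap. The loop is the stopping rule itself; nothing forces a fixed N in advance.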

Optional stopping is problematic when using p-values, but is “No problem for Bayesians!” (Rouder, 2014; see also this blog post from Kruschke). A recent paper in press at Psychological Methods (Schönbrodt et al., in press) shows how to use optional stopping with Bayes factors, so-called “sequential Bayes factors” (SBF).
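A small simulation shows why optional stopping is a problem for p-values. Suppose the null hypothesis is true, and one tests after every participant and stops as soon as p < .05. The long-run false-positive rate then climbs well above 5%. This sketch assumes normally distributed scores with a known standard deviation of 1, so that a z-test can stand in for the t-test. The function names and simulation settings are illustrative.

```python
import math
import random


def two_sided_p(z):
    """Two-sided p-value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rate(n_min, n_max, n_sims=2000, alpha=0.05, seed=1):
    """Share of simulated null experiments that end with p < alpha.

    Each experiment adds one participant at a time and runs a z-test at every
    n from n_min to n_max, stopping at the first significant result.
    Setting n_min == n_max gives a single test at a fixed sample size.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        total = 0.0
        for n in range(1, n_max + 1):
            total += rng.gauss(0.0, 1.0)      # H0 is true: mean 0, known sd 1
            if n >= n_min:
                z = total / math.sqrt(n)      # z = sample mean / (1 / sqrt(n))
                if two_sided_p(z) < alpha:
                    hits += 1
                    break
    return hits / n_sims


fixed_rate = false_positive_rate(n_min=100, n_max=100)
peek_rate = false_positive_rate(n_min=10, n_max=100)
print("single test at N = 100:  ", fixed_rate)
print("test after every n >= 10:", peek_rate)
```

The first rate should sit close to .05. The second should be several times larger, because every extra look is another chance for a false positive.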

The figure below shows a recent set of data from my lab in which we used SBF for optional stopping. Following the advice of the SBF paper, we set our stopping criteria as N > 20 and either BF10 > 6 (evidence in favour of the alternative) or BF10 < 1/6 (evidence in favour of the null); these thresholds are shown as horizontal dashed lines. The circles show the progression of the Bayes factor (on a log scale) as the sample size increased. We stopped at 76 participants, with “strong” evidence supporting the alternative hypothesis.
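Given scores in the order participants were recruited, the trajectory and the point at which the criteria were first met can be computed as below. This is a hedged sketch. It assumes a one-sample (or paired-difference) design and uses the BIC approximation to the Bayes factor in place of whatever default Bayes factor produced the figure. It works with the natural log of BF10, matching the log-scale plot, and returns the first n > 20 at which BF10 > 6 or BF10 < 1/6. `log_bf10_trajectory` and `first_crossing` are hypothetical names.

```python
import math


def log_bf10_trajectory(scores):
    """List of (n, log BF10) after each participant, in recruitment order.

    BIC approximation for a one-sample test of mean = 0:
    log BF10 = -0.5*log(n) - (n/2)*log(SSE1/SSE0).
    Assumes the early scores are not all identical (variance > 0).
    """
    path = []
    total = total_sq = 0.0
    for n, x in enumerate(scores, start=1):
        total += x
        total_sq += x * x
        if n >= 2:                                   # a variance needs two scores
            sse1 = total_sq - total * total / n      # H1: mean estimated
            path.append((n, -0.5 * math.log(n) - (n / 2) * math.log(sse1 / total_sq)))
    return path


def first_crossing(path, n_min=20, upper=6.0, lower=1 / 6):
    """First (n, decision) with n > n_min and BF10 outside [lower, upper], else None."""
    for n, log_bf in path:
        if n > n_min and log_bf > math.log(upper):
            return n, "H1"
        if n > n_min and log_bf < math.log(lower):
            return n, "H0"
    return None


# Made-up, clearly positive scores: 1.00, 1.01, ..., 1.39.
scores = [1.0 + 0.01 * i for i in range(40)]
print(first_crossing(log_bf10_trajectory(scores)))  # → (21, 'H1')
```

Because N > 20 is part of the rule, even overwhelming early evidence only triggers a stop at the 21st participant.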

One thing troubled me when I was writing these results up: I could well imagine a skeptical reviewer noticing that the Bayes factor seemed to favour the null at sample sizes between 30 and 40, even though the stopping criterion was not reached. A reviewer might therefore wonder whether, had some of the later participants who showed no effect (e.g., participants 54–59) been recruited earlier, we would have stopped data collection and found evidence in favour of the null. Put another way, how robust was our final result to the order in which our participants were recruited?

Assessing the Effect of Recruitment Order

I was not aware of any formal way to assess this question, so for the purposes of the paper I was writing I knocked up a quick simulation. I randomised the recruitment order of our sample and plotted the sequential Bayes factor for this “new” recruitment order, repeating this 50 times. I reasoned that if our conclusion was robust to recruitment order, the stopping criterion in favour of the null should rarely (if ever) be met. The results of this simulation are below. The stopping criterion for the null was never met, suggesting our results were robust to recruitment order.
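The recruitment-order check can be sketched as follows. Shuffle the observed scores, recompute the sequential Bayes factor for each shuffled order, and record what the stopping rule would have done. This illustration assumes a one-sample design and uses a BIC approximation rather than a default JZS Bayes factor. It also goes slightly beyond a purely visual check. For each order, it records the first boundary crossed after n = 20, and whether the null boundary is reached at any point. The helper names, the seed and the placeholder data are hypothetical; the post's actual scores are not given.

```python
import math
import random


def log_bf10_path(scores):
    """(n, log BF10) after each participant; BIC approximation, mean = 0 vs free."""
    path, total, total_sq = [], 0.0, 0.0
    for n, x in enumerate(scores, start=1):
        total += x
        total_sq += x * x
        if n >= 2:
            sse1 = total_sq - total * total / n
            path.append((n, -0.5 * math.log(n) - (n / 2) * math.log(sse1 / total_sq)))
    return path


def check_order(path, n_min=20, upper=6.0, lower=1 / 6):
    """Return (decision at the first crossing or None, whether the null boundary is ever met)."""
    first, touched_null = None, False
    for n, log_bf in path:
        if n <= n_min:                      # the rule only applies once N > n_min
            continue
        if log_bf < math.log(lower):
            touched_null = True
        if first is None and log_bf > math.log(upper):
            first = "H1"
        elif first is None and log_bf < math.log(lower):
            first = "H0"
    return first, touched_null


def recruitment_order_check(scores, n_perms=50, seed=2016):
    """Apply the stopping rule to n_perms random recruitment orders of the same scores."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_perms):
        order = list(scores)
        rng.shuffle(order)                  # a new hypothetical recruitment order
        results.append(check_order(log_bf10_path(order)))
    return results


rng = random.Random(1)
scores = [rng.gauss(0.4, 1.0) for _ in range(76)]   # placeholder, not the post's data
results = recruitment_order_check(scores)
print("orders that would stop for H0:", sum(first == "H0" for first, _ in results))
print("orders ever meeting the null boundary:", sum(touched for _, touched in results))
```

Counting orders whose first crossing is the null boundary asks what the stopping rule would actually have done. The second count is closer to the visual check described in the text.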

Recruitment Order Simulation

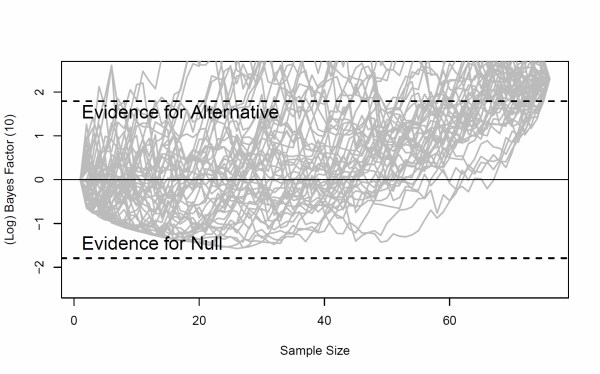

Then I became curious: how robust are SBFs to recruitment order when we know what the true effect is? I simulated data for 100 participants with an effect known to be small (d = 0.3), and plotted the SBF as “participants” were recruited. I tried different random seeds until I found a pattern I was interested in: I wanted a data set in which the SBF comes very close to the stopping criterion in favour of the null, even though we know the alternative is true. It took me a few tries, but eventually I got the plot below.
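The seed search can be sketched like this. Draw 100 scores from a population with d = 0.3, then keep the first seed whose trajectory dips close to the null boundary (without crossing it after n = 20) and still ends with BF10 > 6. The definition of “close” (BF10 below 1/3 here), the seed range and the function names are illustrative assumptions. The Bayes factor is a BIC approximation for a one-sample test, not a default JZS Bayes factor.

```python
import math
import random


def log_bf10_path(scores):
    """(n, log BF10) after each participant; BIC approximation, mean = 0 vs free."""
    path, total, total_sq = [], 0.0, 0.0
    for n, x in enumerate(scores, start=1):
        total += x
        total_sq += x * x
        if n >= 2:
            sse1 = total_sq - total * total / n
            path.append((n, -0.5 * math.log(n) - (n / 2) * math.log(sse1 / total_sq)))
    return path


def find_dipping_seed(d=0.3, n=100, near=1 / 3, lower=1 / 6, upper=6.0,
                      n_min=20, max_seeds=2000):
    """Return (seed, scores) for the first simulated sample whose BF10 drops below
    `near` but never below `lower` after n_min, and ends above `upper`.
    Returns None if no seed in range(max_seeds) qualifies."""
    for seed in range(max_seeds):
        rng = random.Random(seed)
        scores = [rng.gauss(d, 1.0) for _ in range(n)]   # true effect d, sd 1
        path = log_bf10_path(scores)
        lowest = min(log_bf for m, log_bf in path if m > n_min)
        if math.log(lower) <= lowest < math.log(near) and path[-1][1] > math.log(upper):
            return seed, scores
    return None


found = find_dipping_seed()
if found is not None:
    print("seed with a near-miss for the null:", found[0])
```

Fixing each seed separately (one `random.Random(seed)` per candidate) makes the chosen data set reproducible from the seed alone.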

Note that the dip toward the null criterion is even more extreme than in my experiment. This data set therefore seemed ideal for testing my method of assessing the robustness of the final conclusion (“strong evidence for the alternative”) to recruitment order. I followed the same procedure as before, simulating 50 random recruitment orders, and plotted the results below.

The null criterion is met in only one of the 50 simulated “experiments”. This is pleasing, at least for the paper I am due to submit soon: the SBF (at least in these two small examples) seems robust to recruitment order.

R Code

If you would like to see the R code for the plots above, you can download the HTML file for this post, which includes it.

Very nice post! What you compute is close to what Taper and Lele (2011) call the “local reliability of the evidence”, which is the distribution of the strength of evidence under hypothetical repetitions of the experiment (or, in your case, hypothetical participant orders) given the data at hand; i.e., it is a post-data reliability.

The difference is that they do bootstrapping (i.e., with replacement) and you did resampling without replacement.

In any case, I think your simulations convincingly show that a different participant order would not have changed your conclusions. Good luck with the submission!

Taper, M. L., & Lele, S. R. (2011). Evidence, evidence functions, and error probabilities. In M. R. Forster & P. S. Bandyopadhyay (Eds.), Handbook for Philosophy of Statistics. Elsevier Academic Press. Retrieved from http://www.cimat.mx/~ponciano/TCR/TaperLeleEvidence.pdf

Thanks Felix; I would have been very surprised if what I did hadn’t been done before, as most of what I think of has already been done 🙂 Thanks for the link; I will check their paper out. Is it better to bootstrap than to resample without replacement, or are they just two different ways to skin the same cat for Bayes factors?

I’d say: *with* replacement you treat your sample as a population and ask what happens if you collect hypothetical new samples which are like your actual sample. This will give you more variability in the trajectories compared to resampling without replacement.

E.g., all of your permutations will end up at the same BF at n_max. With replacement, you will have some variability at n_max.
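The point made in this reply can be checked directly. Shuffling the scores (resampling without replacement) always reproduces the same final Bayes factor at n_max, because the full sample is unchanged. Bootstrapping (resampling with replacement) instead gives a distribution of final Bayes factors. The sketch assumes a one-sample (or paired-difference) design. It uses a BIC approximation to the Bayes factor rather than a default JZS Bayes factor, and runs on simulated placeholder data, since the experiment's scores are not given. The function names are hypothetical.

```python
import math
import random


def final_log_bf10(scores):
    """BIC-approximate log BF10 (mean = 0 vs free mean) for the full sample."""
    n = len(scores)
    total = sum(scores)
    total_sq = sum(x * x for x in scores)
    sse1 = total_sq - total * total / n
    return -0.5 * math.log(n) - (n / 2) * math.log(sse1 / total_sq)


def resampled_final_bfs(scores, n_reps=200, seed=7):
    """Final log BF10 at n_max under permutation and under the bootstrap."""
    rng = random.Random(seed)
    # Without replacement: a reordering of the same scores.
    perm = [final_log_bf10(rng.sample(scores, len(scores))) for _ in range(n_reps)]
    # With replacement: a new sample of the same size drawn from the observed scores.
    boot = [final_log_bf10(rng.choices(scores, k=len(scores))) for _ in range(n_reps)]
    return perm, boot


rng = random.Random(3)
scores = [rng.gauss(0.3, 1.0) for _ in range(76)]  # placeholder data, not the post's
perm, boot = resampled_final_bfs(scores)
print("permutation spread of log BF10:", max(perm) - min(perm))  # zero up to rounding error
print("bootstrap spread of log BF10:  ", max(boot) - min(boot))
```

Only the path to n_max changes under permutation, whereas the bootstrap also varies where the path ends. That extra variability is what the reply means.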